Welcome to The Logical Box!

For leaders who want AI to help, not add more work.

Hey,

If this is your first time here, welcome. The Logical Box is a weekly newsletter for owners and leaders who want AI to reduce real work, not add new work. Each issue focuses on one idea: see where work breaks down, fix the clarity first, then add AI where it actually helps.

Before this week's issue, I want to share something with you directly.

I started The Logical Box around the end of 2024. Early on I tried a news format, and in January 2025 I shifted to what this became: a weekly training-style brief focused on clarity, systems, and practical AI adoption. I believed in the format. I still do.

But after twelve months of consistent work without the growth I needed to keep going at this pace, I am putting The Logical Box on pause after this issue.

I do not know yet if this is temporary or permanent. I am being honest about that because you deserve honesty more than you deserve a clean ending I cannot guarantee.

What I do know is this: the ideas in this newsletter are real. Unclear work is still the reason most AI initiatives fail. That problem is not going away, and neither am I.

If you want to stay connected, find me on LinkedIn. If The Logical Box comes back in some form, you will hear about it there first.

Thank you for reading. Now, on to this week.

This Is Happening in Your Industry Right Now

You approved an AI project.

The team ran a pilot. It produced something usable. A few people were genuinely impressed.

Then the weeks passed. Then a quarter. The pilot is technically still active, but it is not going anywhere. Nobody shut it down. Nobody scaled it. It just lives in a kind of organizational holding pattern.

If you have been in that situation, you are not alone. And if you think the problem is the tool, this issue is for you.

The Numbers Show How Widespread This Problem Is

According to S&P Global Market Intelligence, the share of companies abandoning most of their AI initiatives jumped from 17% in 2024 to 42% in 2025.

The average organization scrapped 46% of its AI proof-of-concepts before they ever reached production. That means nearly half of the AI proof-of-concepts being built right now never make it into the hands of real teams doing real work.

That is not a technology failure. It is a clarity failure.

The tools worked well enough in the pilot. The model generated the output. The demo was clean. But when it came time to plug the AI into the actual day-to-day work, something fell apart. And most teams could not name exactly what.

Source: S&P Global Market Intelligence, Voice of the Enterprise: AI and Machine Learning, Use Cases 2025 - spglobal.com

Here Is What the Breakdown Actually Looks Like

Picture a team that just ran an AI pilot for writing client summaries. The model did a decent job. It pulled the key points, organized them cleanly, and saved each person about twenty minutes per summary.

But three months later, the team is not using it consistently. Some people use it some of the time. Others went back to doing it manually. And nobody can explain why it did not stick.

If you looked closely, here is what you would find.

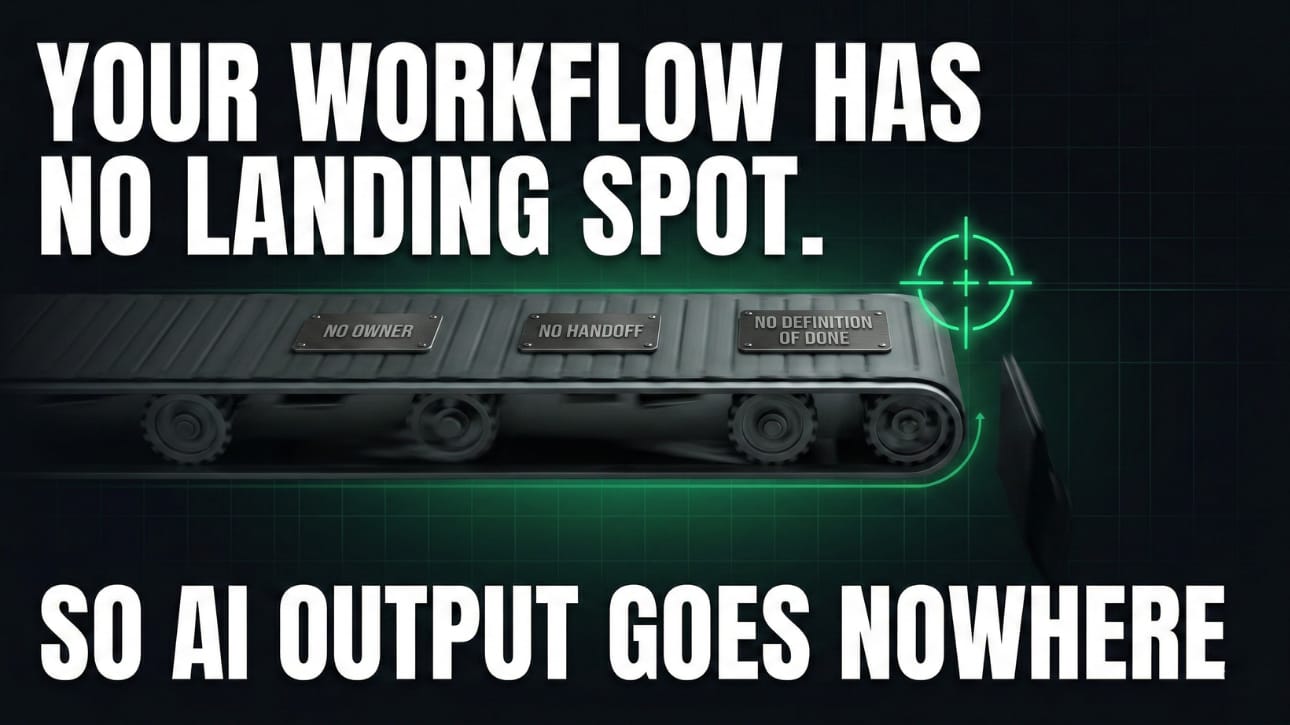

The pilot was built to prove AI could do the task. Nobody built the workflow that would receive it.

There was no agreed definition of what a finished summary looked like. There was no named person responsible for the quality of the output. There was no clear step for who reviewed the AI draft before it went to the client. And when the AI produced something off, nobody knew whose job it was to fix it.

So the tool worked. And the work around the tool did not.

This is the part that surprises most leaders. They assume the workflow will figure itself out once AI is in the picture. It does not. AI takes whatever is already happening and runs faster. If the work is clear, AI speeds up clarity. If the work is fuzzy, AI speeds up confusion.

The unclear handoffs, the unwritten standards, the unowned decisions: those gaps were there before the pilot started. AI just made them impossible to ignore.

Stalled Pilots Are More Expensive Than Failed Ones

A failed pilot is painful but clean. You learn something and move on.

A stalled pilot is the expensive kind. It lingers. It consumes time in review meetings. It drains credibility because the team can see that nothing changed after all the effort. And it makes the next AI initiative harder to launch, because people remember what happened the last time.

There is also a momentum cost. The window when a team is genuinely curious about AI is not unlimited. When you fill that window with a pilot that produces a good demo and then stalls, you close the window. Skepticism takes root, and it is much harder to uproot than indifference.

The repeat work that was supposed to be removed is still happening. The hours that were supposed to come back are not coming back. And the license is still being paid.

The Real Reason Pilots Stall: The Wrong Question Gets Asked

Most AI pilots start by trying to answer this question: can AI do this task?

That is a reasonable question. But it is the wrong starting point.

When a team runs a pilot, they are testing whether the tool produces acceptable output. Does it write a decent email? Does it summarize accurately? Does it pull the right data? If yes, the pilot is considered a success.

But there is a second question that almost never gets asked before the pilot starts:

Is our work clear enough to absorb what AI produces?

This is a completely different question. It is not about the tool at all. It is about the work the tool is supposed to plug into.

Think of it like building a new road. You can build a road that is smooth and well-designed. But if it does not connect to the existing streets on either end, nobody can use it. The road is not the problem. The connections are.

AI output is the road. The workflow around it is the street system. If the street system is unclear, the road goes nowhere.

For AI to move from a pilot to production, three things have to be true at the same time. Someone has to own the output. The definition of done has to be written down. And the step after the AI produces something has to be mapped and assigned.

When those three things exist, pilots scale. When they are missing, pilots stall. Every time.

Five Questions That Tell You If a Workflow Is Ready for AI

Before you run a new AI pilot, or before you try to revive one that has gone quiet, work through these five questions. Write the answers down. Do not guess. If you cannot answer a question clearly, that is your clarity gap. That is where to start.

Spend thirty minutes on this. It will save months.

Question 1: What does done look like for this task?

This is the most skipped question in AI adoption. Teams focus on what AI will do and never write down what the finished output needs to look like.

Done is not "AI writes a draft." Done is a specific, describable result. What does the output include? What format is it in? What standard does it meet before it moves to the next step? Write that in one sentence. If you cannot, the task is not defined clearly enough yet.

Question 2: Who is the single person responsible for this output?

Not the team. Not the department. One person. When the AI produces something incorrect or incomplete, who is accountable for catching it and fixing it?

When nobody owns the output, everyone assumes someone else is watching it. That is how wrong information moves downstream without anyone stopping it. Name the person before the pilot starts.

Question 3: Where does the AI output go after it is produced?

Map the next step. Not in general terms. Specifically. Who receives the output? What do they do with it? What decision depends on it? How does it connect to the next piece of work?

If you cannot draw that path in two or three steps, the handoff is undefined. AI output that lands without a clear next step creates confusion, not speed.

Question 4: What is the plan for when the output is wrong?

Not if it is wrong. When it is wrong. Every AI system produces incorrect output at some point. The difference between teams that handle it well and teams that abandon the tool is whether there is a plan before the first mistake happens.

Who reviews the output before it reaches the end of its path? What do they check for? What happens if something looks off? Is there a step for that, or does incorrect output move downstream unnoticed? Name the safeguard now.

Question 5: What does this workflow look like right now, without AI?

Draw the current process in five steps or fewer. Not the ideal version. The actual version. What happens first, what happens next, and where does the work end up?

This step matters because AI does not create workflows. It plugs into them. If you cannot draw the existing process in five steps, the work is not stable enough to add AI yet. Fix the process first. Once it is clear and repeatable, AI has a real place to land.

If you can answer all five cleanly, you have a workflow ready for AI. If any answer is blank, that blank is your real problem. Start there.
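If your team tracks pilots in a script rather than on paper, the same check can be written in a few lines. This is a minimal sketch in Python, not a tool from The Logical Box. The class name, the field names, and the sample answers for the client summary pilot are all made up for illustration. It records one written answer per question and lists the ones left blank.

```python
from dataclasses import dataclass, fields


@dataclass
class WorkflowReadiness:
    """Written answers to the five questions. An empty string means unanswered.

    Field names are hypothetical labels for the five questions in this issue.
    """
    definition_of_done: str = ""   # Question 1: what does done look like?
    output_owner: str = ""         # Question 2: the single responsible person
    next_step: str = ""            # Question 3: where the output goes next
    plan_when_wrong: str = ""      # Question 4: the safeguard for bad output
    current_process: str = ""      # Question 5: the workflow today, without AI


def clarity_gaps(answers: WorkflowReadiness) -> list[str]:
    """Return the names of the questions that were left blank."""
    return [
        f.name
        for f in fields(answers)
        if not getattr(answers, f.name).strip()
    ]


# Hypothetical answers for the client summary pilot described earlier.
pilot = WorkflowReadiness(
    definition_of_done="One-page summary with key points and agreed next actions.",
    output_owner="",  # nobody named yet
    next_step="Account lead reviews the draft, then sends it to the client.",
    plan_when_wrong="",  # no safeguard defined yet
    current_process="Call, notes, manual draft, review, send.",
)

gaps = clarity_gaps(pilot)
print(gaps if gaps else "Ready for AI")
```

Any name that shows up in the printed list is a blank answer, and that blank is where to start.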

One Question to Ask Before Any AI Decision Gets Made

Bring this into your next team meeting or project review. Ask it out loud before approving or continuing any AI initiative:

"What is the handoff step where AI output enters the work, and who owns that handoff?"

If the room goes quiet, or if three different people give three different answers, the workflow is not ready. That is not a reason to cancel the project. It is a reason to spend an hour getting the handoff defined before moving forward.

That one hour of clarity work will do more for the project than another round of prompt testing.

The One Thing to Remember From This Issue

AI does not fix unclear work. It runs faster inside it.

The pilots that make it from proof-of-concept to real production are not the ones with the most advanced model. They are the ones where someone, before the pilot launched, took time to define done, name an owner, and map the step after the AI output arrives.

That preparation takes a few hours. Skipping it costs months of stalled work, slow momentum, and teams that stop believing AI can help them.

Fix the work first. Then add AI.

Try this FREE worksheet below to help you through the process.

If you want help working through these five questions on a real workflow inside your business, that is exactly what we build inside AI Clarity Hub.

Build your skills here!

I launched AI Clarity Hub, a private space for owners and leaders who want AI to reduce real work, not add new work.

Inside the hub, we work through exactly what I described today: finding the clarity gaps, building simple systems, and only then adding AI where it actually helps. Members get access to live training sessions, ready-to-use templates, and a library of AI assistants built for real business workflows.

Thanks for reading,

Andrew Keener

Founder of Keen Alliance & Your Guide at The Logical Box

Please share The Logical Box link if you know anyone else who would enjoy it!